v 0.4.5 (hand-tracking)

The most important feature of this build (0.4.5) is hand-tracking support for Oculus Quest. The support is quite basic: hands are tracked, but nothing extra is done with the tracked information. There is no smoothing and no prediction. This results in some jitteriness, but for the time being I don't want to get in the way of hand-tracking too much.

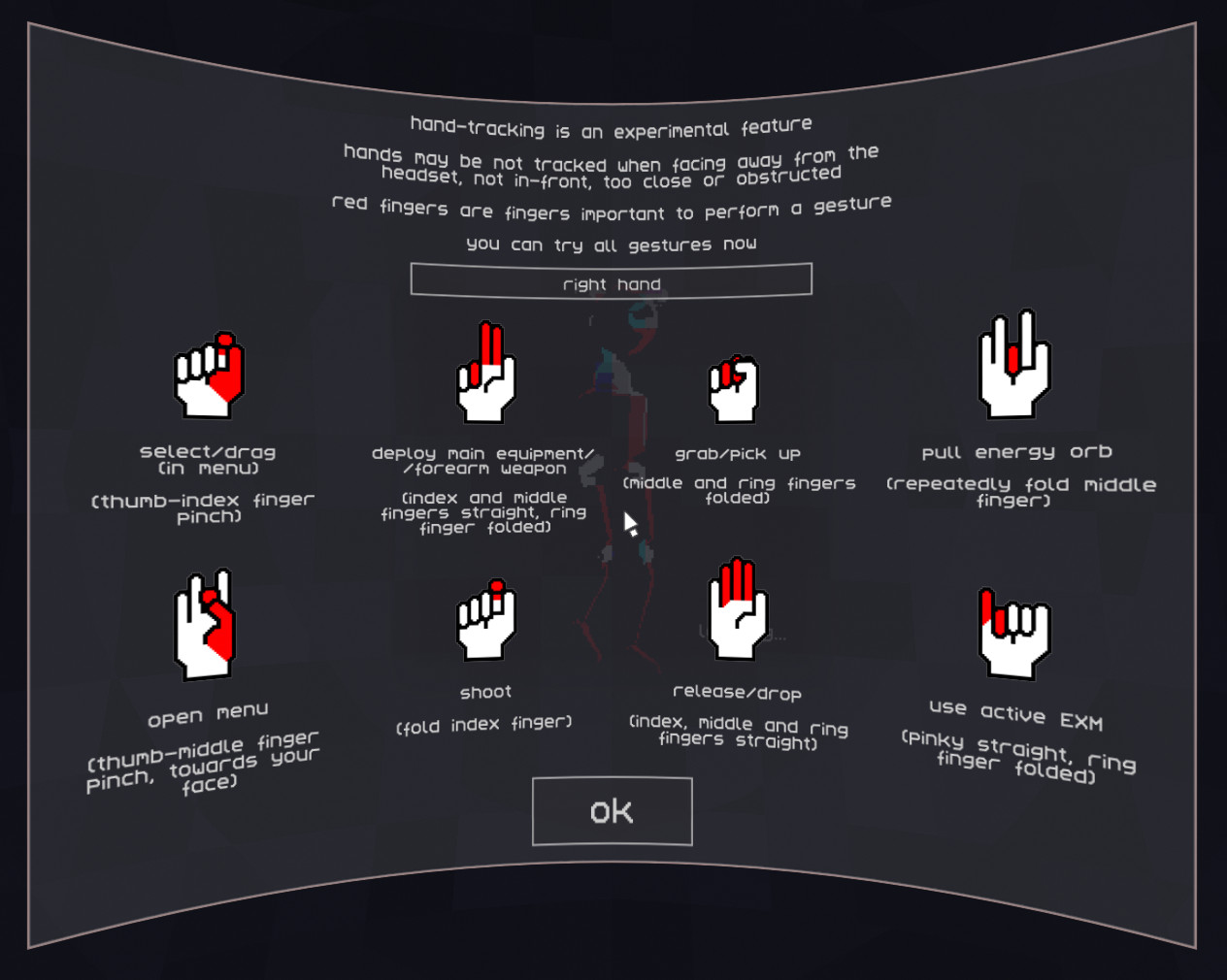

Simple gestures are handled. They are all described within the game, so I won't go into details here on how to perform them. The important thing is that I wanted the gestures to be distinct, but also that you could perform several of them at the same time (like holding a gun, shooting, pulling energy orbs and using EXMs). I was quite happy with the result when I was just testing the gestures themselves. But then I started to play the game and...

One important thing to mention here: there is an issue when you move. I am aware of it and it will be fixed, just not right now. I haven't yet found the actual cause. I have a few suspects and I'll be trying to figure it out, though it may take some time.

Well, I am amazed at how well it tracks your hands, as long as it has a clear view of them. If the view is not clear, a lot of issues may happen. The worst and most common one is that you lose all tracking info. If you try to aim with your arm stretched out, most likely there will be no information about your fingers (because they're obstructed by your wrist and arm) and tracking is lost. There are also smaller issues: if fingers are obstructed (even by other fingers), they are put in their default pose. I had to change a few gestures to counter that, but it still doesn't work great in the game at the moment. There is another problem, although it is somewhat solvable: general jitteriness of tracking.

If you look at your tracked hand, you see it shaking, moving all the time. This isn't an issue if you just grab things, do simple gestures etc. But when you are holding a gun and trying to aim, the gun goes all over the place. And you can't stretch your arm out to make it easier, because all tracking info is then lost.
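The build applies no smoothing, so the following is only a sketch of one common way this kind of jitter could be damped, not something the game does. It shows an exponential moving average over a tracked position. The class name `ExponentialSmoother`, the `alpha` parameter and the convention of passing `None` when tracking is lost are all assumptions made for this illustration.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class ExponentialSmoother:
    """Hypothetical low-pass filter for a tracked position (illustration only)."""

    alpha: float = 0.3  # assumed weight of the newest sample, in (0, 1]
    _state: Optional[Vec3] = None

    def update(self, sample: Optional[Vec3]) -> Optional[Vec3]:
        if sample is None:
            # Tracking lost: drop the history so stale data is not blended in later.
            self._state = None
            return None
        if self._state is None:
            # First valid sample after a gap: start from it directly.
            self._state = sample
        else:
            a = self.alpha
            self._state = tuple(
                a * new + (1.0 - a) * old for new, old in zip(sample, self._state)
            )
        return self._state
```

A lower `alpha` gives a steadier gun but makes the hand lag behind the real one, which is one reason to be careful about adding it.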

I still want to keep it in the game, as it still works, especially when you remove all NPCs from the game. You can just walk around and push some buttons - there aren't many of them right now, but more devices will be coming.

A few technical details.

Hands in the game are a bit different than real hands. That was done on purpose. I didn't anticipate hand-tracking. If I did it the way the SDK describes, I would have to either use the provided hand model or remodel my hands. I didn't want to remodel my hands as I was not sure how hand-tracking would work. I couldn't just copy the bones either, as they are completely different. Using fingertips alone was also not working, as fingers in the two models have different lengths. What I did is that I still use fingertip data for IK, but I alter it depending on how stretched the fingers are. If they are stretched, I alter the fingertip location so that it depends on the actual mesh/skeleton data (longer fingers, starting bones placed a bit differently). This works well enough.
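A rough sketch of that idea, under my own assumptions rather than taken from the game's code: the IK target stays at the tracked fingertip while the finger is bent, and moves towards a point derived from the in-game finger's own base position and length as the finger straightens. The function `adjusted_fingertip`, its parameters and the `stretch_start` threshold are hypothetical names chosen for this example.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _add(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


def _scale(a: Vec3, s: float) -> Vec3:
    return (a[0] * s, a[1] * s, a[2] * s)


def adjusted_fingertip(
    tracked_base: Vec3,         # base (knuckle) of the tracked finger
    tracked_tip: Vec3,          # fingertip reported by tracking
    tracked_length: float,      # full length of the tracked finger
    model_base: Vec3,           # where the in-game finger starts
    model_length: float,        # full length of the in-game finger
    stretch_start: float = 0.7  # assumed: below this stretch, use raw data
) -> Vec3:
    """Return an IK target for the in-game fingertip (illustrative sketch)."""
    offset = _sub(tracked_tip, tracked_base)
    dist = math.sqrt(sum(c * c for c in offset))
    if dist == 0.0 or tracked_length <= 0.0:
        return tracked_tip
    # 1.0 means the tracked finger is fully straight.
    stretch = min(dist / tracked_length, 1.0)
    # 0 while bent, ramping to 1 as the finger becomes straight.
    weight = max(0.0, (stretch - stretch_start) / (1.0 - stretch_start))
    direction = _scale(offset, 1.0 / dist)
    # Where the in-game fingertip would be if it pointed the same way.
    model_tip = _add(model_base, _scale(direction, model_length))
    return _add(tracked_tip, _scale(_sub(model_tip, tracked_tip), weight))
```

With a fully straight finger the target lands at the end of the longer in-game finger. With a folded finger the raw tracked fingertip is used unchanged.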

The way I track gestures is that four fingers (index, middle, ring, pinky) can each be in one of three normal states (folded, straight, neutral) plus one extra (pinch). Each gesture may require any combination of states for any of the fingers. I tried to use generous states (like "not folded", which means "neutral or straight"), but with fingers jumping around when there's no clear view of the hand, I had to rely on exact states.
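To make the scheme concrete, here is a minimal sketch of how such state-based matching could look. It is not the game's code. The gesture names (`hypothetical_grip`, `hypothetical_index_pinch`, `hypothetical_open_hand`) and their finger requirements are invented for illustration, since the real gestures are only described in-game.

```python
from enum import Enum
from typing import Dict, FrozenSet, List


class Finger(Enum):
    INDEX = 0
    MIDDLE = 1
    RING = 2
    PINKY = 3


class FingerState(Enum):
    FOLDED = 0
    NEUTRAL = 1
    STRAIGHT = 2
    PINCH = 3


# A "generous" state: neutral or straight both count.
NOT_FOLDED: FrozenSet[FingerState] = frozenset(
    {FingerState.NEUTRAL, FingerState.STRAIGHT}
)
FOLDED_ONLY: FrozenSet[FingerState] = frozenset({FingerState.FOLDED})

# Each gesture lists allowed states only for the fingers it cares about.
# All gesture names and requirements here are made up for the example.
GESTURES: Dict[str, Dict[Finger, FrozenSet[FingerState]]] = {
    "hypothetical_grip": {
        Finger.MIDDLE: FOLDED_ONLY,
        Finger.RING: FOLDED_ONLY,
        Finger.PINKY: FOLDED_ONLY,
    },
    "hypothetical_index_pinch": {
        Finger.INDEX: frozenset({FingerState.PINCH}),
    },
    "hypothetical_open_hand": {finger: NOT_FOLDED for finger in Finger},
}


def active_gestures(hand: Dict[Finger, FingerState]) -> List[str]:
    """Return every gesture whose finger requirements the hand satisfies."""
    return [
        name
        for name, required in GESTURES.items()
        if all(hand[finger] in allowed for finger, allowed in required.items())
    ]
```

Because a gesture constrains only some fingers, several can be active at once: a hand with a pinching index finger and the other three fingers folded matches both `hypothetical_grip` and `hypothetical_index_pinch`. Replacing `NOT_FOLDED` with a single exact state corresponds to the stricter matching described above.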

There's still a lot of stuff that can be done to improve it but some issues won't be solved by the game (when no tracking info is provided) and I am not sure if those issues can be solved at all.

Still, hand-tracking can be used by other kinds of games. Shooters are not the best option, mostly due to jitteriness, although with some aim assist (Pistol Whip does that pretty well) it could be ok. Fighting games are out of the question. If you hold your hands too close, no tracking info is provided. If you try to punch, your wrist obstructs your fingers and the system has no idea where your hand is. Again, no tracking info. I think that some magic-related game, possibly non-combat, could work well: finger movement could affect how strong a spell is or add some features to it (example: the arm waving required to summon a creature could be the same, but by doing it with fists you could summon a golem, while moving fingers quickly could result in a fire demon etc).

Oh, I finally plugged in an Oculus Rift S (I also upgraded my computer a bit) and it works fine :( which means I still have to find the bug that prevents some Rift S users from playing Tea For God.

Now I want to create a mini update, focused only on Apparatus Grid and EXMs (a result of some great feedback - if you have any problems, ideas, please feel free to contact me). And after that - tutorials!

Tea For God

vr roguelite using impossible spaces / euclidean orbifold

| Field | Value |
| --- | --- |
| Status | In development |
| Author | void room |
| Genre | Shooter |
| Tags | euclidean-orbifold, impossible-spaces, Oculus Quest, Oculus Rift, Procedural Generation, Roguelite, Virtual Reality (VR) |
| Languages | English |

Comments

Finally got around to trying your new hand tracking. It's really wonderful. I found it rather striking how much freeing my hands from the controllers peeled one more layer of abstraction from the experience. Shooting and fighting were, of course, not as good as on controllers, and grabbing can be sticky, and the waist inventory may have to move for the purpose of camera angle. Still, I am probably going to be playing with hand tracking more often than not for now, as I typically play with passive enemies anyway, and button pushing feels particularly good.

Nice work, I always wondered how well hand tracking could be used in most games without movement inputs (outside of possibly teleportation) and forgot all about Tea For God!

I don't have a Quest myself, but it's awesome to see your implementation of it. Hopefully finger tracking will get more attention if someone makes an open source version for PC (or Valve packs it into SteamVR). With so many headsets having 2 or more cameras on them now, it could probably have some level of input on most devices.

The Vive/Pro do have a finger tracking sdk (https://developer.vive.com/resources/knowledgebase/vive-hand-tracking-sdk/) but I'm not sure anyone even knows it exists.

I kind of hope for a PC version, although I'd prefer it to be handled via SteamVR (or the Oculus SDK or WMR SDK) rather than dealing with each specific device's libraries.

There could even be gloves that use the same system. While gloves kind of contradict the whole idea (to have nothing in your hands), they could be much more precise and might not have as many problems as camera-based hand-tracking currently has. Of course those don't have to be gloves, it can be something that you just put on your hand - I think that I've seen something like that, I just can't remember the name.